Netdata is a lightweight real-time monitoring tool. For the moment it doesn’t have any history storage, but it’s nevertheless a cool web app, and I like the design of its charts.

You can try it yourself on the demo page and find more information about it on the official site.

Hardening your webserver’s SSL ciphers

If you are one of those rigid people who must get a straight “A” on the Qualys SSL Server Test for every server, you should really bookmark this page:

https://hynek.me/articles/hardening-your-web-servers-ssl-ciphers

[Varnish] Beware of Vary header

Vary is one of the most powerful HTTP response headers. Used correctly, it can do wonderful things. Unfortunately, it has been misused so often that today most CDNs no longer honor it and simply refuse to cache a response if Vary is used for anything other than HTTP compression.

Using it for HTTP compression

Most people use the Vary header exclusively with the Accept-Encoding value, in order to cache several versions of the same URI depending on the value of the Accept-Encoding request field. Common values for Accept-Encoding are gzip, deflate and, more rarely, sdch, but many more exist. Also keep in mind that any combination of these individual values is valid!

To keep the number of cached versions to a minimum, you can use the following VCL snippet to normalize the Accept-Encoding header, keeping only gzip and deflate as acceptable values:

    if (req.http.Accept-Encoding) {
        if (req.http.Accept-Encoding ~ "gzip") {
            set req.http.Accept-Encoding = "gzip";
        } elsif (req.http.Accept-Encoding ~ "deflate") {
            set req.http.Accept-Encoding = "deflate";
        } else {
            remove req.http.Accept-Encoding;
        }
    }
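
To see why this keeps the cache small, here is a minimal Python sketch of the same decision logic. It is only an illustration of the VCL above, not something Varnish runs; the function name `normalize_accept_encoding` and the sample header values are made up for the example.

```python
from typing import Optional


def normalize_accept_encoding(value: Optional[str]) -> Optional[str]:
    """Reduce an Accept-Encoding header to "gzip", "deflate" or None.

    Like the VCL snippet, this is a plain case-sensitive substring match.
    """
    if not value:
        return None  # no header at all: nothing to normalize
    if "gzip" in value:
        return "gzip"  # gzip wins whenever the client accepts it
    if "deflate" in value:
        return "deflate"
    return None  # only unknown encodings: behave as if the header was absent


# Many raw header values collapse into at most three cache variants.
raw_headers = ["gzip, deflate", "gzip, deflate, sdch", "deflate", "sdch", None]
variants = {normalize_accept_encoding(header) for header in raw_headers}
print(sorted(str(v) for v in variants))
```

Whatever the clients send, a given URI ends up with at most three cached versions: gzip, deflate and uncompressed.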

Using it with other fields

It is possible to put any other field in the Vary value, and even several fields by separating their names with commas. But I don’t recommend this setup because of how CDNs treat it.

Anyway, if you want to use Vary correctly, you must always keep these two cardinal rules in mind: never use the raw values of the indicated field, and never choose a field with too many variants.

For example, the following fields should NEVER be used in a Vary value:

- Referer: because that is utterly stupid

- Cookie: because it is probably the most unique request header ever

- User-Agent: because with more than 8000 common variants, you are pretty sure to never hit your cache again
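
The effect of the two rules can be sketched in a few lines of Python. This is a simplified model of how a cache might build one key per variant, not the actual algorithm of Varnish or of any CDN; `variant_key`, `device_class`, the `X-Device` header and the sample User-Agent strings are all hypothetical names chosen for the illustration.

```python
from typing import Dict, Tuple


def variant_key(url: str, vary: str, request_headers: Dict[str, str]) -> Tuple[str, ...]:
    """Build a cache key from the URL plus the request value of each field listed in Vary."""
    fields = [name.strip().lower() for name in vary.split(",") if name.strip()]
    headers = {name.lower(): value for name, value in request_headers.items()}
    return (url,) + tuple(headers.get(name, "") for name in fields)


def device_class(user_agent: str) -> str:
    """Hypothetical normalization: reduce a User-Agent to two buckets."""
    return "mobile" if "Mobile" in user_agent else "desktop"


user_agents = [
    "Mozilla/5.0 (X11; Linux x86_64) Firefox/54.0",
    "Mozilla/5.0 (X11; Linux x86_64) Firefox/53.0",
    "Mozilla/5.0 (iPhone; CPU iPhone OS 10_3 like Mac OS X) Mobile/14E304",
    "Mozilla/5.0 (Linux; Android 7.0) Mobile Safari/537.36",
]

# Varying on the raw header: one cached copy per distinct User-Agent string.
raw_keys = {variant_key("/index.html", "User-Agent", {"User-Agent": ua}) for ua in user_agents}

# Varying on a normalized value: one cached copy per device class.
normalized_keys = {
    variant_key("/index.html", "X-Device", {"X-Device": device_class(ua)})
    for ua in user_agents
}

print(len(raw_keys), len(normalized_keys))
```

With the raw header every new browser version creates a new cached copy, while the normalized value keeps the number of copies bounded by the number of buckets you chose.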

NAT traversal

In a typical IPSec setup, tunnels are established between devices that have public IPs. But what happens if one or both gateways are behind firewalls doing NAT? As you can imagine, this setup simply can’t work. There are two reasons for that.

First, the IKE exchange can’t succeed because of how NAT translation works. The address of the source computer embedded in the IP payload can’t match the source address of the IKE packet, since the latter is replaced by the address of the NAT device.

Second, ESP traffic can’t be NATed because ESP has no port numbers. Therefore, when a client behind a NAT device attempts to initiate an ESP connection, the device is unable to maintain a unique translation state for these packets.

NAT-Traversal to the rescue!

To bypass these limitations, NAT-T was created. The idea is simply to use a NAT-friendly protocol (UDP) to encapsulate the ESP exchange. During phase 1, if NAT-T is used, IKE negotiations switch to UDP port 4500.

After the tunnel is established, instead of sending plain ESP packets, each one is encapsulated in a UDP packet. This trick allows the device doing NAT to maintain the connection state in its table.
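
As an illustration, here is a minimal Python sketch of that encapsulation, following the layout described in RFC 3948: an ordinary 8-byte UDP header in front of the ESP packet, a marker of four zero bytes in front of IKE messages sharing port 4500, and a single 0xFF byte as NAT keepalive. The function names and the sample SPI are made up, the checksum is simply left at zero, and a real implementation lives in the kernel or the IKE daemon, not in a script like this.

```python
import struct

NAT_T_PORT = 4500
NON_ESP_MARKER = b"\x00\x00\x00\x00"  # prefixes IKE messages sent on port 4500
NAT_KEEPALIVE = b"\xff"               # one-byte payload that keeps the NAT mapping alive


def udp_header(src_port: int, dst_port: int, payload_len: int) -> bytes:
    """Pack a UDP header: source port, destination port, total length, checksum (zero here)."""
    return struct.pack("!HHHH", src_port, dst_port, 8 + payload_len, 0)


def encapsulate_esp(esp_packet: bytes, src_port: int = NAT_T_PORT, dst_port: int = NAT_T_PORT) -> bytes:
    """Wrap an ESP packet in UDP so that a NAT device has ports to track."""
    return udp_header(src_port, dst_port, len(esp_packet)) + esp_packet


def classify_payload(udp_payload: bytes) -> str:
    """Tell apart what can arrive on UDP port 4500."""
    if udp_payload == NAT_KEEPALIVE:
        return "keepalive"
    if udp_payload[:4] == NON_ESP_MARKER:
        return "ike"  # an ESP packet starts with its SPI, which is never zero
    return "esp"


# A fake ESP packet: SPI (4 bytes), sequence number (4 bytes), then opaque ciphertext.
esp = struct.pack("!II", 0x1234ABCD, 1) + b"ciphertext"
datagram = encapsulate_esp(esp)
print(len(datagram), classify_payload(datagram[8:]))
```

The NAT device only ever sees a regular UDP flow between two ports, which it can track like any other connection.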


[DRAC] Segfault with virtual console

Okay, let’s be clear: I dislike Dell’s iDRAC. Sure, unlike iLO there is no license to buy and the interface is pretty convenient, but why is something as essential as console access so buggy and unreliable?

Latest example: no matter which version of Java I use on my Arch machine, the console crashes right at startup. After digging a little, I luckily came across this link, which explains everything and gives this workaround:

    zapan:~# vi /tmp/idracfix.c

    #define _GNU_SOURCE
    #include <dlfcn.h>   /* dlsym, RTLD_NEXT */
    #include <stddef.h>  /* NULL */
    #include <string.h>  /* strcmp */

    /* Override dlopen() and pretend that libssl.so cannot be loaded. */
    void *dlopen(const char *filename, int flags) {
        if (filename && !strcmp(filename, "/usr/lib/libssl.so"))
            return NULL;

        /* Forward every other request to the real dlopen(). */
        void *(*original_dlopen)(const char *, int);
        original_dlopen = dlsym(RTLD_NEXT, "dlopen");
        return (*original_dlopen)(filename, flags);
    }

Compile it:

    gcc -Wall -fPIC -shared -o idracfix.so idracfix.c -ldl

From now on, launch javaws with LD_PRELOAD set whenever you use the iDRAC console:

    LD_PRELOAD=./idracfix.so javaws viewer.jnlp

Initialize an encrypted external HDD or USB key

Prerequisites

- an external HDD or USB key, already partitioned

- cryptsetup

Initializing

First, format the partition (this erases everything on it):

    cryptsetup luksFormat /dev/<partition>

Now we have a brand new LUKS volume. Let’s open it:

    cryptsetup luksOpen /dev/<partition> <my_alias>

The volume should now be available under /dev/mapper/<my_alias>.

Create a filesystem inside the volume:

    mkfs.ext4 /dev/mapper/<my_alias>

Perfect. Now close the LUKS volume:

    cryptsetup luksClose <my_alias>

Initialization is done. The next time you connect your device, your file manager should show a pop-up asking for the passphrase of your volume.


[MySQL] Upgrade 5.1->5.6

In theory, mysql_upgrade should do the trick, but sometimes it fails some steps ‘silently’. Then, when doing a backup, you get this:

    Cannot load from mysql.proc. The table is probably corrupted

Gasp. Don’t panic, this is a very simple error to fix. Just change the type of the ‘comment’ column:

    alter table mysql.proc change comment comment text;

How to check MX records

First you can check that the declaration is correct, using dig:

    # dig foobar.com MX

    ; <<>> DiG 9.7.3 <<>> foobar.com MX
    ;; global options: +cmd
    ;; Got answer:
    ;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 32034
    ;; flags: qr rd ra; QUERY: 1, ANSWER: 1, AUTHORITY: 0, ADDITIONAL: 1

    ;; QUESTION SECTION:
    ;foobar.com. IN MX

    ;; ANSWER SECTION:
    foobar.com. 14400 IN MX 0 foobar.com.

    ;; ADDITIONAL SECTION:
    foobar.com. 14400 IN A 69.89.31.56

    ;; Query time: 198 msec
    ;; SERVER: 127.0.0.1#53(127.0.0.1)
    ;; WHEN: Fri Jun 23 18:56:51 2017
    ;; MSG SIZE rcvd: 60

Next, you can use an online tool such as MXToolbox to check other requirements and make sure the server’s IP isn’t already listed on a commonly used blacklist.

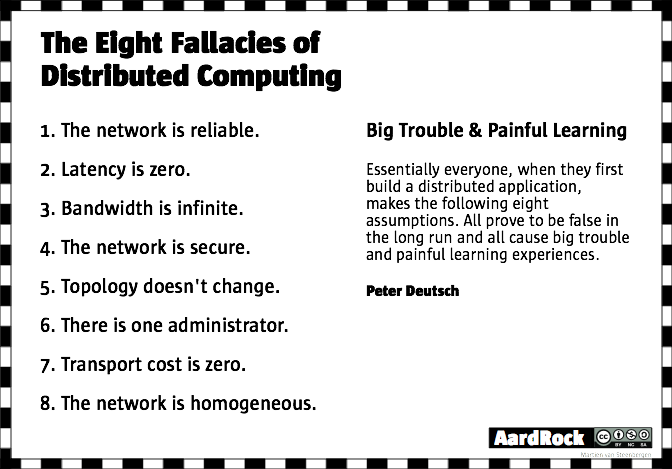
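
If you are curious about what such a tool does with the dig answer, here is a small Python sketch. It parses an MX line like the one in the ANSWER SECTION above and builds the name a DNS blacklist lookup would query, which is the IP with its octets reversed followed by the blacklist zone. The helper names and the zone `dnsbl.example.org` are placeholders, not a real blacklist, and the sketch does not perform any network query.

```python
import ipaddress
from typing import NamedTuple


class MxRecord(NamedTuple):
    name: str
    ttl: int
    preference: int
    exchange: str


def parse_mx_line(line: str) -> MxRecord:
    """Parse one MX line from dig's ANSWER SECTION."""
    name, ttl, rr_class, rr_type, preference, exchange = line.split()
    if (rr_class, rr_type) != ("IN", "MX"):
        raise ValueError("not an IN MX record: " + line)
    return MxRecord(name, int(ttl), int(preference), exchange)


def dnsbl_query_name(ip: str, zone: str) -> str:
    """Build the DNSBL lookup name: reversed IPv4 octets followed by the zone."""
    octets = str(ipaddress.IPv4Address(ip)).split(".")  # also validates the address
    return ".".join(reversed(octets)) + "." + zone


record = parse_mx_line("foobar.com. 14400 IN MX 0 foobar.com.")
print(record.preference, record.exchange)

# "dnsbl.example.org" is a placeholder zone used only for this example.
print(dnsbl_query_name("69.89.31.56", "dnsbl.example.org"))
```

Resolving the resulting name as an A record is the usual way to ask a blacklist about an IP: an answer typically means the address is listed, and NXDOMAIN means it is not.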

Fallacies of distributed computing

The fallacies of distributed computing are a set of assertions made by L. Peter Deutsch and others at Sun Microsystems, describing the false assumptions that programmers new to distributed applications invariably make.

[MySQL] The query cache blues

The MySQL query cache is one of the prominent features of MySQL and a vital part of query optimization. It caches a SELECT query together with its result set, so that identical SELECTs execute faster because the data is fetched from memory.

So in theory it rocks. Right?

Yep, but not quite. First, there is the obvious problem of changing queries and data. If you frequently update a table, you invalidate the cached results that depend on it. If you change any parameter in the query, you simply don’t hit the cache. In both cases you’re probably not going to get much use out of the MySQL query cache.
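
Both effects are easy to reproduce with a toy model. The Python sketch below is not how mysqld implements its cache; `ToyQueryCache` and the sample queries are invented for the illustration. It only mimics the two behaviours described above: a lookup keyed on the exact query text, and the eviction of every cached result that depends on a table as soon as that table is written to.

```python
from typing import Callable, Dict, List, Set, Tuple


class ToyQueryCache:
    """Toy model: results are keyed by exact query text and dropped on any table write."""

    def __init__(self) -> None:
        self._results: Dict[str, Tuple[Set[str], List[tuple]]] = {}
        self.hits = 0
        self.misses = 0

    def select(self, sql: str, tables: List[str], run: Callable[[], List[tuple]]) -> List[tuple]:
        entry = self._results.get(sql)
        if entry is not None:
            self.hits += 1
            return entry[1]
        self.misses += 1
        rows = run()  # the expensive part: actually executing the query
        self._results[sql] = (set(tables), rows)
        return rows

    def write(self, table: str) -> None:
        """Any write to a table evicts every cached result that read from it."""
        stale = [sql for sql, (tables, _) in self._results.items() if table in tables]
        for sql in stale:
            del self._results[sql]


def fetch() -> List[tuple]:
    return [(1, "alice")]


cache = ToyQueryCache()
cache.select("SELECT * FROM users WHERE id = 1", ["users"], fetch)  # miss: first execution
cache.select("SELECT * FROM users WHERE id = 1", ["users"], fetch)  # hit: identical text
cache.select("SELECT * FROM users WHERE id = 2", ["users"], fetch)  # miss: parameter changed
cache.write("users")                                                # an UPDATE on users
cache.select("SELECT * FROM users WHERE id = 1", ["users"], fetch)  # miss: entry was evicted
print(cache.hits, cache.misses)
```

Out of four SELECTs only one is served from the cache, which is roughly what happens on a table that mixes varied reads with frequent writes.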

Then there is the problem of how the query cache implementation works on modern multi-core CPUs. Simply stated, mysqld has to lock the query cache both when checking whether a result is in the cache and when writing a result set into it. When writing, locking can occur several times, because the cache has to be assigned memory block by block. In a highly concurrent environment, that means a lot of mutex contention, which may become a performance bottleneck.

For these reasons, the query cache has been disabled by default since MySQL 5.6.

If you really want or need to re-enable it, set query_cache_type to 1 and give query_cache_size a non-zero value in the server configuration. Note that query_cache_limit only caps the size of an individual cached result; it doesn’t turn the cache on.
